This is yet another post on Unraid’s poor SMB performance, but I think I have narrowed down the cause of the problem to the Unraid FUSE filesystem. I discovered this about two months ago, but with COVID-19 and no weekend kids’ sporting event duties, I now have some time to post.

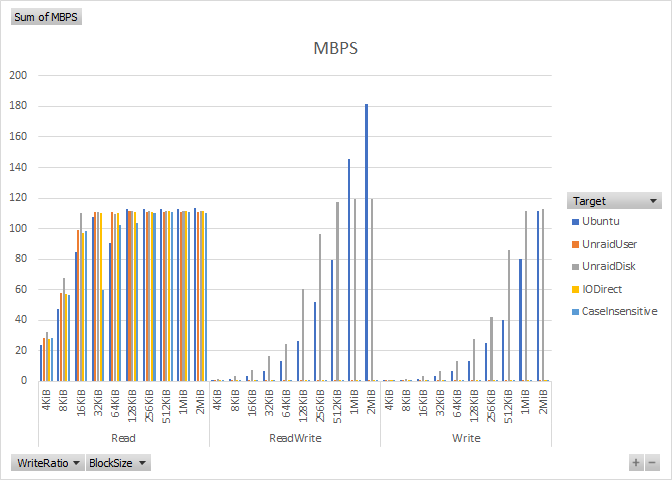

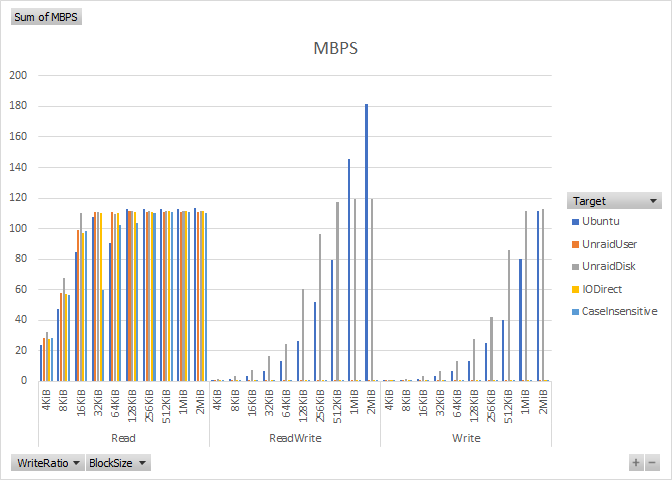

In this round of testing I compared the performance of “User” shares vs. “Disk” shares. An Unraid “User” share is a volume backed by Unraid’s FUSE filesystem, while a “Disk” share is a volume directly backed by the disk’s native filesystem.
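On a typical Unraid system the disks are mounted at `/mnt/diskN`, the cache at `/mnt/cache`, and user shares appear under `/mnt/user` as a merged view of those mounts. The sketch below is a minimal, hypothetical illustration of the extra lookup work such a merged (union) layer can add to every file operation, compared with a disk share that maps a path to exactly one filesystem. The function `resolve_user_path` and the probe order (cache first, then data disks) are assumptions for illustration; Unraid’s FUSE code is closed source and may resolve paths differently.

```python
import os
import tempfile
from typing import Optional, Sequence


def resolve_user_path(branches: Sequence[str], rel_path: str) -> Optional[str]:
    """Return the first branch path that contains rel_path, or None.

    Hypothetical union lookup: a path on a "User" share may have to be
    probed on several underlying filesystems before the I/O can proceed.
    """
    for root in branches:
        candidate = os.path.join(root, rel_path)
        # Each probe is an extra stat() on a different filesystem; a "Disk"
        # share maps the path to exactly one filesystem and skips this loop.
        if os.path.exists(candidate):
            return candidate
    return None


if __name__ == "__main__":
    # Throwaway layout loosely mimicking /mnt/cache, /mnt/disk1, /mnt/disk2.
    with tempfile.TemporaryDirectory() as mnt:
        branches = [os.path.join(mnt, name) for name in ("cache", "disk1", "disk2")]
        for b in branches:
            os.makedirs(os.path.join(b, "media"))
        # Place the file only on disk2, so the lookup probes all three branches.
        with open(os.path.join(branches[2], "media", "movie.mkv"), "w") as f:
            f.write("x")
        print(resolve_user_path(branches, os.path.join("media", "movie.mkv")))
```

A real union filesystem would typically cache these lookups, so this toy version overstates the per-call cost; it only shows where the extra indirection sits.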

Per suggestions from Unraid, I also tested enabling DirectIO and disabling SMB case sensitivity.

As before, I used my DiskSpeedTest utility to automate the testing.

The case-insensitive SMB and DirectIO options made no discernible difference.

But we can see that disk share performance is close to what we get from Ubuntu. This means the performance problem is caused by the Unraid FUSE code, which affects all user shares.

One might expect some performance degradation from the FUSE code needing to perform disk parity operations, but this level of impact is unacceptable compared to other software-based RAID systems. Worse, the test was performed on the SSD cache volume, where no parity computation is required.
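For context on what parity operations cost, here is a textbook sketch of single XOR parity and the read-modify-write it forces on every write to a parity-protected disk. It assumes plain XOR parity, which corresponds to Unraid’s first parity disk; the second parity disk uses a different code that is not shown, and the function names are hypothetical. None of this arithmetic applies to the cache volume, which is why degraded cache performance points at the software path rather than parity.

```python
from functools import reduce
from typing import Sequence


def xor_bytes(a: bytes, b: bytes) -> bytes:
    """Byte-wise XOR of two equal-length blocks."""
    if len(a) != len(b):
        raise ValueError("blocks must be the same length")
    return bytes(x ^ y for x, y in zip(a, b))


def full_parity(blocks: Sequence[bytes]) -> bytes:
    """Parity block computed from every data disk's block at one offset."""
    return reduce(xor_bytes, blocks)


def update_parity(old_parity: bytes, old_data: bytes, new_data: bytes) -> bytes:
    """Read-modify-write: new parity = old parity ^ old data ^ new data.

    Only the target disk and the parity disk are touched, but one logical
    write still costs two reads (old data, old parity) and two writes.
    """
    return xor_bytes(xor_bytes(old_parity, old_data), new_data)


if __name__ == "__main__":
    disks = [b"\x0f\x00", b"\xf0\x01", b"\x33\x10"]
    parity = full_parity(disks)
    new_block = b"\xaa\xbb"
    parity = update_parity(parity, disks[1], new_block)
    disks[1] = new_block
    # The incrementally updated parity matches a full recomputation.
    assert parity == full_parity(disks)
```

The same XOR relation lets any single lost block be rebuilt from the parity block and the surviving disks.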

The Unraid FUSE code is proprietary, so code inspection is not possible, but I suspect the code path is less than optimized. In my experience, the performance and quality demands of filesystem code require extremely competent and diligent developers. Beyond the obvious performance degradation, I’ll offer two other examples of questionable code behavior:

1. All IO is halted while waiting for a disk to spin up, even if that disk has nothing to do with servicing IO backed by another disk. This could point to an overly simplified locking or synchronization model, instead of an IO-path-based locking model.
2. The cache volume is not backed by parity, yet IO performance is still severely degraded. This directly shows degradation caused by the code, not by the IO, and could be avoided with direct IO passthrough, or with file handle remapping as done in overlay filesystems.

But I’m really just speculating; other than observation, I have no substantiation.
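To illustrate the difference between the two locking models in the first example, here is a minimal sketch. `GlobalLockIO` and `PerDiskLockIO` are hypothetical toy models in which `time.sleep()` stands in for a disk spin-up; they make no claim about how Unraid’s FUSE code is actually structured.

```python
import threading
import time
from collections import defaultdict


class GlobalLockIO:
    """Hypothetical coarse model: one lock serializes IO to all disks."""

    def __init__(self) -> None:
        self._lock = threading.Lock()

    def io(self, disk: str, spin_up_delay: float = 0.0) -> None:
        with self._lock:
            # While one disk spins up, requests for every other disk wait too.
            time.sleep(spin_up_delay)


class PerDiskLockIO:
    """Hypothetical finer model: each disk has its own lock."""

    def __init__(self) -> None:
        self._locks = defaultdict(threading.Lock)
        self._guard = threading.Lock()  # protects creation of per-disk locks

    def _lock_for(self, disk: str):
        with self._guard:
            return self._locks[disk]

    def io(self, disk: str, spin_up_delay: float = 0.0) -> None:
        with self._lock_for(disk):
            # Only requests for this same disk wait for its spin-up.
            time.sleep(spin_up_delay)


def latency_of_other_disk(model, spin_up: float = 0.5) -> float:
    """Time an IO to disk2 issued while disk1 is spinning up."""
    spinner = threading.Thread(target=model.io, args=("disk1", spin_up))
    spinner.start()
    time.sleep(0.05)  # give the disk1 request time to take its lock first
    start = time.perf_counter()
    model.io("disk2")
    elapsed = time.perf_counter() - start
    spinner.join()
    return elapsed


if __name__ == "__main__":
    print(f"global lock:   {latency_of_other_disk(GlobalLockIO()):.2f}s")
    print(f"per-disk lock: {latency_of_other_disk(PerDiskLockIO()):.2f}s")
```

With the global lock, the disk2 request inherits most of disk1’s spin-up delay; with per-disk locks it completes almost immediately. The trade-off is more locks to manage and more care needed for operations that span disks.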

I really do like the flexibility of Unraid as an all-in-one storage, Docker, and virtualization host. But the “proprietary” Unraid RAID implementation is proving to be the weakest link, not just in performance but also in its limit of 28 data + 2 parity drives. I am leaning towards adding my support to the growing number of users who would like to see native ZFS support in Unraid.

Unfortunately, there is still no word from Unraid on a performance fix.
