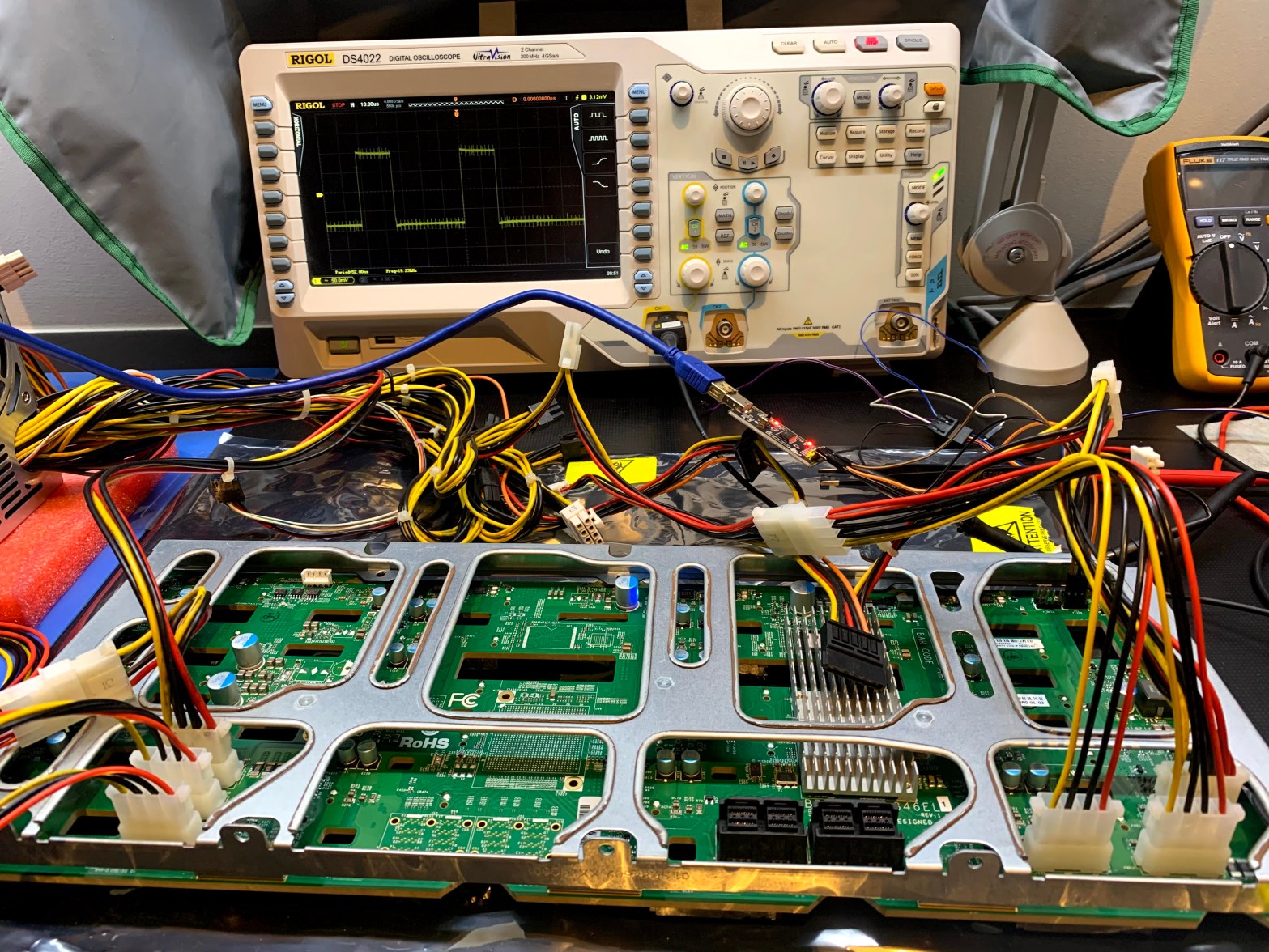

In a previous adventure I replaced an Adaptec HBA with a LSI SAS3 HBA, and the chassis drive bay LED’s stopped working. I suspect the LSI card does not play nice with the SGPIO sideband controller, and I decided to replace the chassis with one similar to my SC846 chassis, where the LSI card andContinue reading “Recovering the Firmware on a Supermicro BPN-SAS3-846EL1 Backplane”

Tag Archives: lsi

Unraid repeat parity errors on reboot

This post started with a quick experiment, but after hardware incompatibilities forced me to swap SSD drives, and subsequently losing a data volume, it turned into a much bigger effort. My two Unraid servers have been running nonstop without any issues for many months, last I looked the uptime on v6.7.2 was around 240 days.Continue reading “Unraid repeat parity errors on reboot”

LSI turns their back on Green

I previously blogged here and here on my research into finding a power saving RAID controllers. I have been using LSI MegaRAID SAS 9280-4i4e controllers in my Windows 7 workstations and LSI MegaRAID SAS 9280-8e controllers Windows Server 2008 R2 servers. These controllers work great, my workstations go to sleep and wake up, and inContinue reading “LSI turns their back on Green”

Storage Spaces Leaves Me Empty

I was very intrigued when I found out about Storage Spaces and ReFS being introduced in Windows Server 2012 and Windows 8. But now that I’ve spent some time with it, I’m left disappointed, and I will not be trusting my precious data with either of these features, just yet. Microsoft publicly announced StorageContinue reading “Storage Spaces Leaves Me Empty”

Windows 8 Install Hangs Booting From LSI 2308 SAS Controller

I’ve previously posted about problems installing Windows 8 on SuperMicro machines, and that SuperMicro released a Beta BIOS that solved the install problems. I’ve since run into two more problems; the install hanging when booting of the LSI 2038 SAS controller, and a BSOD when using a Quadro 5000 video card (more on that inContinue reading “Windows 8 Install Hangs Booting From LSI 2308 SAS Controller”

Hitachi Ultrastar and Seagate Barracude LP 2TB drives

In my previous post I talked about Western Digital RE4-GP 2TB drive problems. In this post I present my test results for 2TB drives from Seagate and Hitachi. The test setup is the same as for the RE4-GP testing, except that I only tested 4 drives from each manufacturer. Unlike the enterprise class WD RE4-GPContinue reading “Hitachi Ultrastar and Seagate Barracude LP 2TB drives”

Western Digital RE4-GP 2TB Drive Problems

In my previous two posts I described my research into the power saving features of various enterprise class RAID controllers. In this post I detail the results of my testing of the Western Digital RE4-GP enterprise class “green” drives when used with hardware RAID controllers from Adaptec, Areca, and LSI. To summarize, the RE4-GP driveContinue reading “Western Digital RE4-GP 2TB Drive Problems”

Power Saving RAID Controller (Continued)

This post continues from my last post on power saving RAID controllers. It turns out the Adaptec 5 series controller are not that workstation friendly. I was testing with Western Digital drives; 1TB Caviar Black WD1001FALS, 2TB Caviar Green WD20EADS, and 1TB RE3 WD1002FBYS. I also wanted to test with the new 2TB RE4-GP WD2002FYPSContinue reading “Power Saving RAID Controller (Continued)”